Starting with SQL Server 2014, Microsoft added a feature called delayed transaction durability. It is an interesting option that can speed up write-heavy workloads where the transaction log is the bottleneck. In this post, I will explain what the feature is and cover its advantages and drawbacks.

Transaction Log Durability

SQL Server 2014 gives us the ability to control when transaction log records are written to disk. There are 2 options: full transaction durability and delayed transaction durability.

- Full Transaction Durability – This is the default level. When a transaction commits, its log records are written to disk BEFORE control is returned to the client/user.

- Delayed Transaction Durability – With this setting, the transaction's log records are written to an in-memory log buffer, and control is returned to the client/user without waiting for them to reach disk. The buffer is flushed to disk later.

Advantages

Delayed transaction durability has a few distinct advantages over full transaction durability.

The first advantage is that the commit doesn’t have to wait for a disk write before giving control back to the client. In many databases, log writes to disk are a point of contention and can slow transactions down quite a bit. Writing to memory is much faster, so commits should generally return sooner if your log disk IO is slow.

Another advantage is that delayed transaction durability batches the writes to disk. Instead of flushing the log to disk for each committed transaction, SQL Server fills the log buffer and then flushes it in larger chunks, which means fewer, bigger IO operations.

Disadvantages

Everything up to this point sounds great, right? Delayed transaction durability comes with one major pitfall: it can cause data loss if SQL Server crashes. Any transaction that has committed but whose log records have not yet been flushed to disk is lost in a crash, even though the client was already told the commit succeeded.

When Should I Use Delayed Transaction Durability?

Only use delayed transaction durability if you can afford some data loss if SQL Server crashes. If you cannot afford any data loss, do not use delayed transaction durability.

How To Turn On/Off Delayed Transaction Durability

Okay, now that you’re an expert on delayed transaction durability, let’s quickly cover the 3 levels of transaction durability and how to activate them.

The database-level DELAYED_DURABILITY option has 3 settings: DISABLED, ALLOWED, and FORCED.

DISABLED – This means delayed transaction durability is turned off. Every transaction is fully durable: its log records go to disk before the commit returns, regardless of any commit-level hint, so there is no potential for this kind of loss.

FORCED – This means that delayed transaction durability is turned on for every transaction in the database, whether or not the commit asks for it. Commits return once the log records are in the memory buffer, so there is a potential for data loss.

ALLOWED – This is the middle ground. Transactions stay fully durable by default, but you can opt in to delayed transaction durability for an individual transaction in your query or stored procedure.

You will configure the durability with one of these statements:

ALTER DATABASE zoobase SET DELAYED_DURABILITY = DISABLED

GO

ALTER DATABASE zoobase SET DELAYED_DURABILITY = FORCED

GO

ALTER DATABASE zoobase SET DELAYED_DURABILITY = ALLOWED
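To confirm which setting a database currently has, one option is to query the sys.databases catalog view, which exposes a delayed_durability_desc column in SQL Server 2014 and later. A minimal sketch, reusing the zoobase name from the statements above:

```sql
-- Show the current delayed durability setting for the example database.
-- delayed_durability_desc returns DISABLED, ALLOWED, or FORCED.
SELECT name, delayed_durability_desc
FROM sys.databases
WHERE name = N'zoobase';
```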

If you use ALLOWED, you will need to add this hint to the COMMIT statement in your SQL query or stored procedure to activate delayed transaction durability for that transaction:

BEGIN TRAN

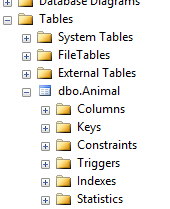

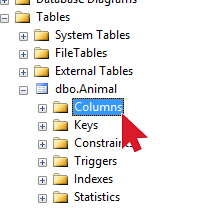

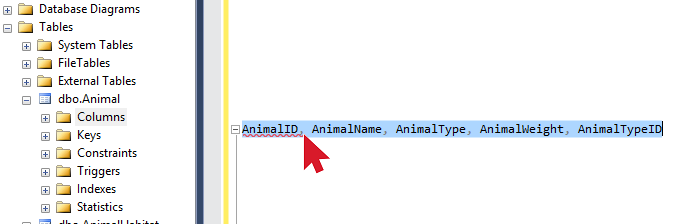

UPDATE Animal

SET AnimalName = 'Cow'

WHERE AnimalName = 'Cattle'

COMMIT TRAN WITH (DELAYED_DURABILITY = ON)
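The buffering advantage described earlier tends to matter most for workloads made up of many small transactions, since each one would otherwise wait on its own log flush. The sketch below is only an illustration of that pattern: the dbo.VisitLog table and the loop count of 10000 are made up for this example, and it assumes the database is set to ALLOWED (with DISABLED, the hint is ignored and every commit is fully durable).

```sql
-- Hypothetical table used only for this illustration.
CREATE TABLE dbo.VisitLog
(
    VisitId   INT IDENTITY(1,1) PRIMARY KEY,
    VisitedAt DATETIME2 NOT NULL
);
GO

DECLARE @i INT = 0;

-- Many tiny transactions: each commit returns once its log records
-- are in the in-memory log buffer instead of waiting for a disk write.
WHILE @i < 10000
BEGIN
    BEGIN TRAN;

    INSERT INTO dbo.VisitLog (VisitedAt)
    VALUES (SYSDATETIME());

    COMMIT TRAN WITH (DELAYED_DURABILITY = ON);

    SET @i += 1;
END;
```

Running the same loop with a plain COMMIT TRAN and comparing the elapsed time is one way to judge whether the setting helps on your hardware. Rows from the most recent iterations are the ones at risk if the server stops before the buffer is flushed.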

Reference: https://msdn.microsoft.com/en-us/library/dn449490.aspx

NOTE: A SQL Server shutdown or restart is handled the same way as a crash. Make sure you flush the log buffer to disk before rebooting!
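One way to do that flush is the sys.sp_flush_log system procedure, which writes the current database's log buffer to disk. A minimal sketch, again assuming the zoobase database from the earlier examples:

```sql
USE zoobase;
GO

-- Flush this database's in-memory log records to disk so that
-- delayed durable transactions committed so far are hardened.
EXEC sys.sp_flush_log;
```

The procedure acts on the database you are connected to, so it would need to be run in each database that uses delayed durability. Committing any fully durable transaction that changes data in the database also forces the earlier delayed durable log records to disk.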